ORNL Research Boosts Privacy, Security in Federated AI

April 29, 2026 — Researchers from the Department of Energy’s Oak Ridge National Laboratory (ORNL) have partnered with scientists from Argonne National Laboratory to develop a pair of complementary advances that make federated learning more secure, flexible and practical for large-scale scientific collaboration without requiring sensitive data to be shared.

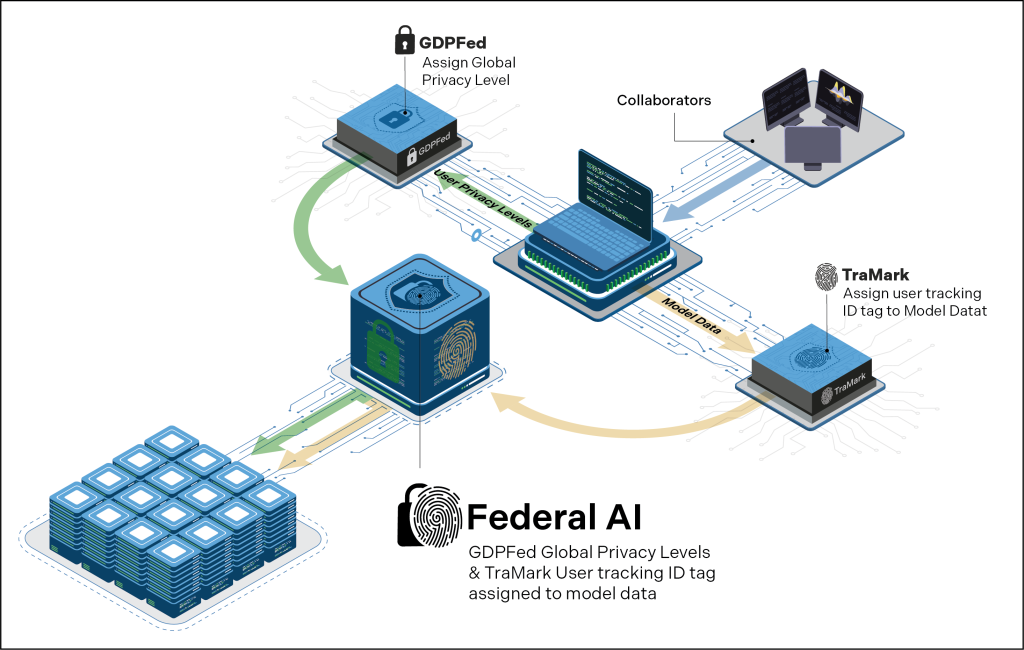

Federated AI architecture illustrating federated learning with integrated privacy and traceability controls. Credit: ORNL/U.S. Dept. of Energy.

Federated learning allows multiple organizations to collaboratively train artificial intelligence models while keeping data local, a capability increasingly important for science domains where data is sensitive, proprietary, regulated or simply too large to move. While the approach protects raw data, it introduces new challenges around privacy guarantees, trust and intellectual property protection. Researchers at ORNL have made significant progress in addressing these challenges through two related studies that together strengthen both data privacy and model accountability in federated systems.
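
The basic idea can be illustrated with federated averaging (FedAvg), a widely used baseline for aggregating client models. The article does not say which aggregation rule the ORNL work builds on, so treat this as a minimal sketch under stated assumptions. The toy one-parameter model, the helper names `local_train` and `fedavg_round`, and the data are all hypothetical. Each client runs a few gradient steps on its own data and returns only the updated parameter. The server then averages those parameters, weighted by each client's dataset size.

```python
from typing import List, Tuple

Dataset = List[Tuple[float, float]]  # (x, y) pairs that stay on one client


def local_train(w: float, data: Dataset, lr: float = 0.05, steps: int = 20) -> float:
    """Gradient descent on mean squared error for the toy model y = w * x."""
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w  # only the model parameter leaves the client, never the raw data


def fedavg_round(w_global: float, clients: List[Dataset]) -> float:
    """One round: broadcast the global model, train locally, average by dataset size."""
    total = sum(len(d) for d in clients)
    return sum(local_train(w_global, d) * len(d) / total for d in clients)


# Hypothetical private datasets held by two institutions
clients = [
    [(1.0, 2.1), (2.0, 3.9)],
    [(3.0, 6.2), (4.0, 7.8), (5.0, 10.1)],
]
w = 0.0
for _ in range(10):
    w = fedavg_round(w, clients)
print(round(w, 2))
```

The server only ever sees model parameters. This is why FedAvg protects raw data, but the parameters themselves can still leak information, which is the gap the privacy and watermarking work below targets.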

“When we think about federated learning, especially in cross-institutional and cross-domain scenarios, we may expect that the clients or participants have different privacy preservation levels, either by preference or because of policies they’re required to follow. That was one of the main challenges we set out to address,” said Olivera Kotevska, a research scientist in computer science and mathematics at ORNL who co-authored the paper.

The first study introduces GDPFed (Group-based Differentially Private Federated Learning) and its extension, GDPFed+, new methods for federated learning when participants have different privacy requirements. In real-world collaborations, such as those spanning national laboratories, universities, industry partners and international facilities, not every participant can operate under the same privacy assumptions. Traditional privacy-preserving federated learning methods must enforce the strictest privacy level across all participants, which can add excessive noise to training and significantly degrade model accuracy.

GDPFed overcomes this limitation by grouping participants according to their privacy needs and applying client-level differential privacy at the group level rather than globally. This approach reduces unnecessary noise for participants with more relaxed privacy requirements while still providing rigorous guarantees for those that need stronger protection.
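
The effect of grouping can be sketched with the Gaussian mechanism commonly used for client-level differential privacy: clip each client update to a norm bound, then add noise scaled to that bound. This is a sketch under stated assumptions, not the GDPFed implementation. In this version, each privacy group gets its own noise multiplier instead of every client inheriting the strictest one. The multipliers are taken as given and are hypothetical; in practice they would come from a privacy accountant for each group's (ε, δ) target, and the source does not describe how GDPFed computes them. All function names and values are illustrative.

```python
import math
import random
from collections import defaultdict
from typing import Dict, List

Vector = List[float]


def clip(update: Vector, max_norm: float) -> Vector:
    """Scale an update so its L2 norm is at most max_norm, bounding one client's influence."""
    norm = math.sqrt(sum(v * v for v in update))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [v * scale for v in update]


def group_dp_aggregate(updates: Dict[str, Vector], group_of: Dict[str, str],
                       noise_multiplier: Dict[str, float], max_norm: float,
                       rng: random.Random) -> Vector:
    """Add Gaussian noise per privacy group (not globally), then average over all clients."""
    groups: Dict[str, List[Vector]] = defaultdict(list)
    for client, upd in updates.items():
        groups[group_of[client]].append(clip(upd, max_norm))
    dim = len(next(iter(updates.values())))
    total = len(updates)
    result = [0.0] * dim
    for g, members in groups.items():
        sigma = noise_multiplier[g] * max_norm  # noise scale for this group only
        for i in range(dim):
            noisy = sum(m[i] for m in members) + rng.gauss(0.0, sigma)
            result[i] += noisy / total  # equals the group mean weighted by its client share
    return result


def aggregate_noise_std(noise_multiplier: Dict[str, float], group_of: Dict[str, str],
                        max_norm: float) -> float:
    """Per-coordinate std of the total noise in the aggregate (independent Gaussians add in variance)."""
    var = sum((noise_multiplier[g] * max_norm) ** 2 for g in set(group_of.values()))
    return math.sqrt(var) / len(group_of)


group_of = {"a": "strict", "b": "strict", "c": "relaxed", "d": "relaxed"}
updates = {"a": [3.0, 4.0], "b": [0.3, 0.4], "c": [1.0, 0.0], "d": [0.0, 1.0]}
per_group = {"strict": 2.0, "relaxed": 0.5}             # hypothetical multipliers
strictest_everywhere = {"strict": 2.0, "relaxed": 2.0}  # "one size fits all" baseline

agg = group_dp_aggregate(updates, group_of, per_group, max_norm=1.0, rng=random.Random(0))
print(aggregate_noise_std(per_group, group_of, 1.0))
print(aggregate_noise_std(strictest_everywhere, group_of, 1.0))
```

With these illustrative numbers, the per-coordinate noise in the aggregate falls from about 0.71 (strictest level applied to everyone) to about 0.52 (noise matched to each group). This gap is the kind of accuracy headroom that group-level privacy is meant to recover.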

Building on this foundation, GDPFed+ further improves performance by combining model sparsification, or removing unimportant parameters that would otherwise accumulate noise, with optimal client sampling, which mathematically determines how often each group should participate in training. Extensive experiments show that GDPFed+ consistently delivers higher model accuracy than existing privacy-preserving methods under the same privacy guarantees.
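
The two GDPFed+ ingredients can be sketched separately. The sketch below makes two assumptions of its own, not taken from the source. First, sparsification keeps the top-k coordinates by magnitude of the previous round's global model, which all participants already share. Second, pruned coordinates receive neither signal nor noise. A real system would also clip the sparsified update so the norm bound applies to the retained coordinates. Client sampling is shown as independent per-client inclusion at a per-group rate. The rates here are hypothetical placeholders, not the optimized values GDPFed+ derives, and every function name is illustrative.

```python
import random
from typing import Dict, List


def topk_mask(reference: List[float], k: int) -> List[bool]:
    """Keep the k coordinates with the largest magnitude in a reference vector."""
    ranked = sorted(range(len(reference)), key=lambda i: abs(reference[i]), reverse=True)
    keep = set(ranked[:k])
    return [i in keep for i in range(len(reference))]


def noisy_sparse_sum(clipped_updates: List[List[float]], mask: List[bool],
                     sigma: float, rng: random.Random) -> List[float]:
    """Sum updates on kept coordinates only; pruned coordinates get no signal and no noise."""
    out = []
    for i, kept in enumerate(mask):
        if kept:
            out.append(sum(u[i] for u in clipped_updates) + rng.gauss(0.0, sigma))
        else:
            out.append(0.0)
    return out


def sample_clients(group_of: Dict[str, str], rate: Dict[str, float],
                   rng: random.Random) -> List[str]:
    """Each client joins the round independently with its group's sampling rate."""
    return [c for c, g in group_of.items() if rng.random() < rate[g]]


rng = random.Random(42)
previous_global = [0.9, -0.05, 0.4, 0.01, -0.7, 0.02]  # shared model from the last round
mask = topk_mask(previous_global, k=3)                 # keeps coordinates 0, 2 and 4
group_of = {"a": "strict", "b": "strict", "c": "relaxed"}
rates = {"strict": 0.5, "relaxed": 0.9}  # hypothetical, not GDPFed+'s optimized rates
participants = sample_clients(group_of, rates, rng)

clipped = [[0.2, 0.1, -0.1, 0.05, 0.3, 0.0], [0.1, 0.0, 0.2, -0.05, -0.1, 0.1]]
print(participants, noisy_sparse_sum(clipped, mask, sigma=0.5, rng=rng))
```

Because only k of d coordinates are noised, the total injected noise variance scales with k·σ² rather than d·σ². Pruning parameters that carry little signal therefore removes noise that would otherwise accumulate in them.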

The second study addresses model leakage and accountability. In federated learning, every participant receives a copy of the trained model, creating the risk that a model could be leaked, shared without authorization, or reused outside the collaboration. Existing watermarking techniques either require direct access to model parameters or cannot reliably identify which participant leaked a model.

To solve this problem, ORNL researchers developed TraMark, a server-side method for embedding traceable, black-box watermarks into federated models. TraMark assigns each participant a unique, invisible watermark embedded in a small, carefully isolated region of the model. These watermarks do not affect normal performance but allow the model owner to verify ownership and identify the source of a leak even when the model is only accessible through an application programming interface. Experiments show that TraMark preserves model accuracy while achieving near-perfect traceability across a range of datasets and attack scenarios.
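
Black-box tracing of this kind is commonly done with trigger sets: secret inputs that a watermarked model maps to predetermined labels. The sketch below shows only the verification side and uses that generic trigger-set approach. It does not reproduce how TraMark embeds watermarks in an isolated region of the model. The model is reached only through a `predict` callable standing in for an API. A leak is attributed to the client whose triggers the model answers correctly at or above a threshold. The trigger values, client names, threshold and `leaked_model` stand-in are all hypothetical.

```python
from typing import Callable, Dict, List, Optional, Tuple

Trigger = Tuple[Tuple[float, ...], int]  # (secret input, expected watermark label)
Predict = Callable[[Tuple[float, ...]], int]


def watermark_match_rate(predict: Predict, triggers: List[Trigger]) -> float:
    """Fraction of a client's secret triggers that the queried model answers as expected."""
    hits = sum(1 for x, label in triggers if predict(x) == label)
    return hits / len(triggers)


def trace_leak(predict: Predict, client_triggers: Dict[str, List[Trigger]],
               threshold: float = 0.9) -> Optional[str]:
    """Return the client whose watermark best matches, or None if no match is convincing."""
    rates = {c: watermark_match_rate(predict, t) for c, t in client_triggers.items()}
    best = max(rates, key=lambda c: rates[c])
    return best if rates[best] >= threshold else None


client_triggers = {
    "lab_a": [((9.0, 1.0), 3), ((9.0, 2.0), 3)],
    "lab_b": [((7.0, 1.0), 5), ((7.0, 2.0), 5)],
}


def leaked_model(x: Tuple[float, ...]) -> int:
    # Hypothetical API endpoint serving lab_b's copy: it reacts only to lab_b's triggers.
    return 5 if x[0] == 7.0 else 0


print(trace_leak(leaked_model, client_triggers))
```

Only query access is needed, which matches the API-only setting described above. The threshold trades false accusations against missed leaks.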

“All the clients have a unique watermark, so it’s easy to trace which update is coming from which client and whether that client is approved in the federation,” Kotevska said. “This is very important in highly collaborative environments, especially when federations are open to new participants.”

Together, the two efforts form a defense-in-depth framework for federated learning. GDPFed and GDPFed+ protect participants’ data and privacy during training, while TraMark protects the resulting models after training by ensuring accountability and intellectual property protection. This combination is particularly valuable for cross-institutional science, where collaborators may have different privacy policies and where trust must be maintained over long-lived, evolving partnerships.

“I think both papers really complement each other,” Kotevska said. “One is about protecting the data and the other is about protecting the model. Together, they make federated learning much more practical for advancing science when data cannot be shared or moved.”

The researchers emphasize that both methods are domain-agnostic and scalable. They can be applied to image, text and scientific datasets; to small collaborations with just a few partners; or to large federations spanning leadership-class computing facilities and international institutions. The software implementations are designed to be reproducible and openly available, lowering barriers for adoption by the broader research community as federated learning becomes an essential tool for advancing science in areas like energy, national security and healthcare.

UT-Battelle manages ORNL for the U.S. Department of Energy’s Office of Science, the largest supporter of basic research in the physical sciences in the United States. The Office of Science is working to address some of the most pressing challenges of our time. For more information, visit energy.gov/science.

Source: Mark Alewine, ORNL
