Arm Holdings has unveiled the AGI CPU, a new chip designed to serve the booming market for AI inference and agentic AI workloads. The AGI CPU is the first silicon Arm has offered directly to customers in its 35-year history (as opposed to selling IP or full subsystems). The UK company, which also launched a reference design for AGI CPU-based servers, is clearly bullish on the chip’s potential to capture a share of the AI boom, with its CEO predicting the new chip will bring in $15 billion in revenue by 2031.

The new AGI CPU boasts some impressive stats. The chip, which Arm co-designed with Meta, is based on a chiplet design using TSMC’s 3nm N3P process. Each of the 136 Neoverse V3 cores runs at 3.5 GHz (or 3.7 GHz in a dual-chip configuration) and sports 2MB of L2 cache. Each core gets 6 GBps of memory bandwidth, while the chip as a whole can tap into 6TB of DDR5 RAM across 12 channels, delivering 800 GBps of aggregate memory bandwidth at 100 nanoseconds of latency or less.
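The per-core and aggregate bandwidth figures quoted above can be cross-checked with simple arithmetic. The short Python sketch below does this using only the numbers reported in this article; the function and constant names are made up for illustration and do not come from Arm.

```python
# Figures as quoted in the article; none are independently measured.
CORES_PER_CHIP = 136
PER_CORE_BANDWIDTH_GBPS = 6
QUOTED_AGGREGATE_GBPS = 800


def aggregate_bandwidth_gbps(cores: int, per_core_gbps: float) -> float:
    """Sum of per-core bandwidth across all cores on one chip."""
    return cores * per_core_gbps


def relative_gap(computed: float, quoted: float) -> float:
    """Fractional difference between a computed and a quoted figure."""
    return (computed - quoted) / quoted


if __name__ == "__main__":
    total = aggregate_bandwidth_gbps(CORES_PER_CHIP, PER_CORE_BANDWIDTH_GBPS)
    gap = relative_gap(total, QUOTED_AGGREGATE_GBPS)
    print(f"{total} GBps computed vs {QUOTED_AGGREGATE_GBPS} GBps quoted ({gap:.1%} gap)")
```

Multiplying 136 cores by 6 GBps gives 816 GBps, within about 2% of the quoted 800 GBps aggregate, so the two figures appear to be rounded versions of the same number.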

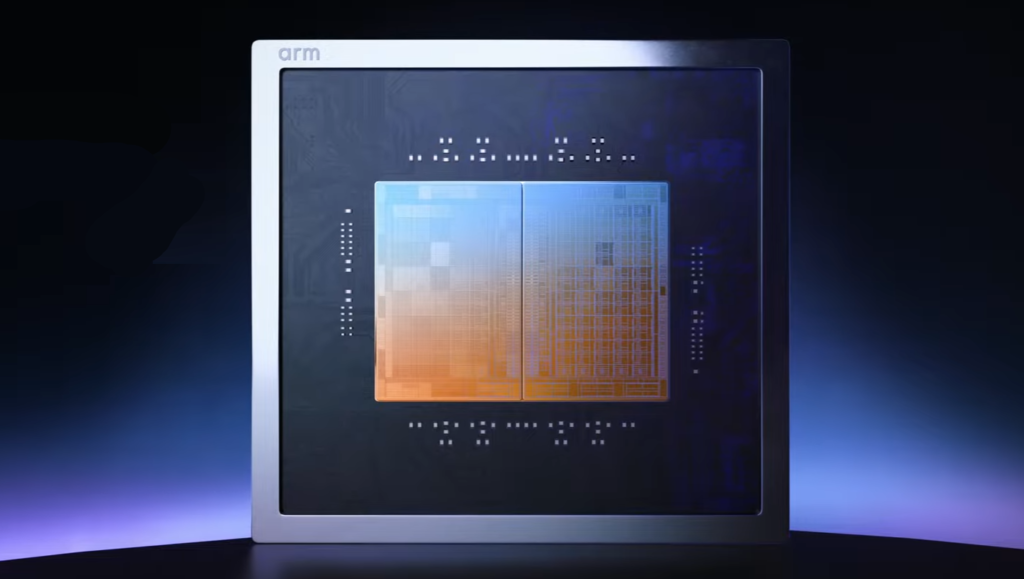

Arm AGI CPU blade for a rack-scale reference architecture

The new AGI chip features 96 lanes of PCIe Gen6 connectivity and support for CXL 3.0 for memory expansion. Arm has bundled all of this within a 300-watt TDP. Arm is touting the chip’s memory bandwidth and per-thread performance, which it says will help customers meet emerging agentic AI workload requirements while staying within their energy budgets.

The market for CPUs is hot at the moment, as the AI boom has increased demand for general-purpose chips that can handle the range of tasks required for AI inference and agentic AI. While powerful GPUs are favored for AI model training and the first stage of AI inference (called prefill), they are not ideal for the second stage of AI inference (called decode), which requires a multitude of tasks to be completed, such as spinning up sandbox environments, running generated code, pulling data from the KV cache, processing SQL queries, and monitoring all these functions so they can be improved upon as part of the machine learning feedback cycle.

Recently at its GPU Technology Conference, Nvidia made a big deal out of Vera, its new 88-core Arm-based chip that it says delivers 1.5x the performance of standard x86 chips with 3x the memory bandwidth (which at 1.2 TBps per chip surpasses that of Arm’s new AGI CPU). Nvidia is also selling a full rack of Vera CPUs to handle tasks as part of its customers’ AI factory buildouts. It’s all part of Nvidia’s “inference king” economics.

Arm’s launch of the AGI CPU shows it is also keen on “inference king” economics. The company, which traditionally has partnered with companies like Nvidia, Meta, Google, and Microsoft in the development of custom chips based on its Arm designs, is now venturing into the chip business on its own.

Arm has also rolled out new reference server configurations for super-dense rack deployments. The first reference design is based on the Open Compute Project DC-MHS design and uses the two-chip AGI CPU configuration, which supports up to 272 cores per blade. With up to 30 blades per 36kW air-cooled rack, the reference design delivers a total of 8,160 cores. Arm is also working with Supermicro on a liquid-cooled 200kW rack capable of housing 336 AGI CPUs for over 45,000 cores, the company says. At the scale of a 1-gigawatt AI factory, that translates into $10 billion in capital expenditure savings compared to x86, Arm claims.
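The rack-level core counts above follow directly from the per-chip figures. The Python sketch below reproduces that arithmetic from the numbers in this article. The cores-per-kilowatt figure it computes is a derived illustration, not a metric Arm has published, and the function names are hypothetical.

```python
# Figures as quoted in the article.
CORES_PER_CHIP = 136


def air_cooled_rack_cores(blades: int = 30, chips_per_blade: int = 2,
                          cores_per_chip: int = CORES_PER_CHIP) -> int:
    """Total cores in the 36kW air-cooled reference rack."""
    return blades * chips_per_blade * cores_per_chip


def liquid_cooled_rack_cores(chips: int = 336,
                             cores_per_chip: int = CORES_PER_CHIP) -> int:
    """Total cores in the 200kW liquid-cooled rack."""
    return chips * cores_per_chip


def cores_per_kw(cores: int, rack_kw: float) -> float:
    """Derived density figure: cores divided by the rack power budget."""
    return cores / rack_kw


if __name__ == "__main__":
    air = air_cooled_rack_cores()
    liquid = liquid_cooled_rack_cores()
    print(f"Air-cooled:    {air} cores, {cores_per_kw(air, 36):.0f} cores/kW")
    print(f"Liquid-cooled: {liquid} cores, {cores_per_kw(liquid, 200):.0f} cores/kW")
```

The air-cooled rack works out to 8,160 cores and the liquid-cooled rack to 45,696 cores, matching the "over 45,000" figure. On these numbers, both designs land at roughly 227 to 228 cores per kilowatt of rack power.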

“Delivering AI experiences at global scale demands a robust and adaptable portfolio of custom silicon solutions, purpose-built to accelerate AI workloads and optimize performance across Meta’s platforms,” said Santosh Janardhan, Meta’s head of infrastructure. “We worked alongside Arm to develop the Arm AGI CPU to deploy an efficient compute platform that significantly improves our data center performance density and supports a multi-generation roadmap for our evolving AI systems.”

Arm is working with a range of other partners on the AGI CPU rollout, including Cloudflare, F5, OpenAI, Positron, Rebellions, SAP, and SK Telecom. Chipmaker Cerebras clearly sees its Wafer Scale Engine as the preeminent chip for AI inference, but it also recognizes the need for smaller CPUs to handle the range of other tasks needed for successful AI deployments.

“These systems need purpose-built AI acceleration alongside efficient, scalable CPUs orchestrating data movement, networking, and coordination at scale,” said Cerebras CEO Andrew Feldman. “Extending the Arm compute platform into AGI-class infrastructure is a positive step for the ecosystem and for customers deploying AI at global scale.”

At a launch event in San Francisco on March 24, 2026, Arm CEO Rene Haas said sales of the new AGI CPU alone would bring in $15 billion for the Cambridge, UK-based company by 2031. Haas forecast that his company, which had about $4 billion in total revenue for 2024, would reach $25 billion in total revenue by 2031.

Haas predicted that emerging AI inference workloads would quadruple demand for CPUs in the foreseeable future. “We may be under-calling that number,” Haas said, according to a CNBC story. “I think the demand is higher than we think it is.”

The day after the announcement of the new chip, Arm’s stock (NASDAQ: ARM) rose 16%. The company currently has a market capitalization of $166.8 billion.

While the Arm AGI CPU will undoubtedly assist with customers’ agentic AI workloads, it likely won’t deliver artificial general intelligence (AGI) on its own, as most AI experts still consider AGI to be decades away.

This article first appeared on HPCwire.

The post Arm Flexes with New Data Center CPU for AI Inference appeared first on AIwire.