Argonne’s Aurora Supercomputer Powers AI-Driven Fusion Disruption Prediction

March 3, 2026 — One of the fastest supercomputers in the world, Aurora is enabling new simulations of a powerful but elusive concept: fusion energy.

If you could build a star on Earth and harness its abundant energy, you’d need to know exactly how to store the star and keep it stable. Fusion energy scientists have been working for decades to make the idea a reality. They now have a potent new tool at their disposal: the Aurora supercomputer at the U.S. Department of Energy’s (DOE) Argonne National Laboratory.

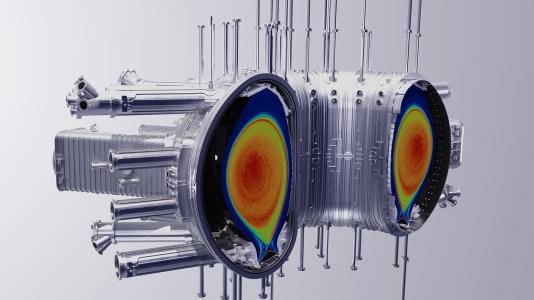

Tungsten density profile in the German tokamak ASDEX-U. Tungsten walls in devices like this can release tungsten particles into the core plasma, and simulating how those impurities spread helps researchers study plasma behavior. Image credit: ALCF Visualization and Data Analytics Team; CS Chang and Julien Dominski/Princeton Plasma Physics Laboratory.

Aurora was opened to scientists across the world in 2025 at the Argonne Leadership Computing Facility (ALCF), a DOE Office of Science user facility. It is among the three fastest computers in the world, capable of performing a quintillion (or a billion billion) calculations per second. That exascale supercomputing power makes Aurora a critical tool in solving grand scientific challenges such as fusion energy, quantum computing and protein design for targeted medicine, among others.

Fusion, in theory, could solve many of the problems posed by our growing need for energy. One of its main fuels, deuterium, can be extracted from water. The fusion reaction is also easy to extinguish, which makes it a safe prospect as a long-term energy source. Indeed, the reaction’s fragility is fusion energy’s primary hurdle: commercially viable power plants need a reliable, tunable energy source. To get there, scientists are using Aurora to simulate conditions inside tokamaks, doughnut-shaped devices that are one of several designs for confining a fusion plasma.

Early Access to a World-Leading Supercomputer

When the Aurora supercomputer made its official debut early last year, scientists from various disciplines had already been using it through the Aurora Early Science Program. Projects chosen for the program had access to the machine for a few months before it transitioned into production. The arrangement was mutually beneficial: Scientists got to optimize their code for Aurora, and the ALCF team got to troubleshoot the system based on the project teams’ experiences.

“These projects help to shake down and debug Aurora’s hardware and software,” said Tim Williams, deputy director of Argonne’s Computational Science division. “They get the earliest possible access to the complete system. As part of that, they help diagnose problems and get them fixed.”

The Early Science Program awarded pre-production computing time and resources to projects in three categories within the ALCF’s mission: simulation, data and learning. Two of those projects focused on fusion energy and were led by William (Bill) Tang and Choongseok (C.S.) Chang of DOE’s Princeton Plasma Physics Laboratory (PPPL).

“Historically, fusion energy has been at the vanguard of use of high performance computing,” Williams said. “And here at the ALCF, there has always been a presence of fusion energy research, because its complexity makes it so computationally intensive.”

Predicting Fusion Energy Disruptions with AI

Achieving fusion energy requires creating an environment where two atomic nuclei join together to form one heavier nucleus, releasing energy in the process. This happens within plasma, a superheated, electrically charged gas. Stars, including the sun, are powered by fusion.

Scientists have recreated this process in experimental, plasma-filled tokamaks such as the DIII-D National Fusion Facility, a DOE Office of Science user facility in California. But they have not yet built a machine that can reliably deliver large amounts of energy.

Magnetic fields within the tokamak form a sort of “bottle” that confines the plasma. Understanding the tokamak’s inner workings involves simulating the flow of plasma energy and mass — an endeavor that is both well established and enduringly difficult. Computational fluid dynamics simulations aren’t confined to plasma: Research at Argonne and elsewhere has explored how the movement of air and liquids plays into hypersonic flight, engine efficiency, weather trends and other phenomena.

“Even just regular fluids are a very complicated scientific problem that we use supercomputers for. When they become turbulent, it’s very chaotic, and it’s hard to predict what they’ll do,” said Argonne assistant computational scientist Kyle Felker. “In tokamaks, we’re complicating this by adding magnetic fields and trying to bring this magnetic fluid up to extreme conditions that don’t occur anywhere on Earth.”

The plasma in the tokamak under construction at ITER, an international collaboration in France, will reach 150 million degrees C (about 270 million degrees F). That is ten times hotter than the center of the sun. Disruptions of the tokamak plasma, which can follow instabilities such as the formation of magnetic islands, can damage the reactor and snuff out the fusion reaction.
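The Fahrenheit value and the comparison with the sun follow from simple arithmetic, using the standard Celsius-to-Fahrenheit conversion and an approximate solar-core temperature of 15 million degrees C:

$$
T_{\mathrm{F}} = \tfrac{9}{5}\,T_{\mathrm{C}} + 32 = 1.8 \times \left(1.5\times10^{8}\right) + 32 \approx 2.7\times10^{8}\ {}^\circ\mathrm{F}
$$

$$
\frac{T_{\mathrm{ITER}}}{T_{\odot,\ \mathrm{core}}} \approx \frac{1.5\times10^{8}\ {}^\circ\mathrm{C}}{1.5\times10^{7}\ {}^\circ\mathrm{C}} = 10
$$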

Felker is working with Tang, a principal research physicist at PPPL, on a project that uses artificial intelligence (AI) to predict when a tokamak disruption is likely. Trained on data from experiments in machines such as DIII-D and the Joint European Torus in the United Kingdom, the code assigns a “disruption score” that indicates the likelihood of an imminent disruption, and it does so within milliseconds.

“We have lots and lots of data from historical campaigns. So we can take AI to learn what we can about these instabilities and hopefully avoid them entirely,” Felker said.

In this case, AI is being called on to help meet a challenge that AI itself is partly driving. The rise of AI tools such as ChatGPT has increased worldwide demand for processing power, and therefore for energy.

“You really have to have more power to have the next-generation machines continue to produce the discoveries that AI has such a tremendous potential to deliver for you,” Tang said. “That’s another major role for what we do in trying to deliver clean fusion energy through magnetic confinement methods.”

Looking Ahead to France’s ITER

Chang, managing principal research physicist at PPPL, leads research focused on fundamental edge plasma physics — what happens when the plasma meets its magnetic boundary. Simulating problems at the plasma edge can help prevent serious confinement issues in the future ITER tokamak.

The kinds of simulations Chang wants to do in a perfect world are not possible, even with an exascale computer like Aurora.

“The bigger the computers, the better,” he said. “The equation we’re trying to solve in such a big machine like ITER is at least five-dimensional, with several tens of charge-state ion species represented by many trillions of mathematical particles. For that, we may need 10 exascale computers.”
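The five dimensions Chang mentions are phase-space dimensions. Assuming a gyrokinetic formulation, a common choice for simulating magnetized fusion plasmas (the article does not name the model used), each ion species $s$ is described by a distribution function of position and two velocity coordinates:

$$
f_s = f_s\!\left(\mathbf{R},\, v_\parallel,\, \mu,\, t\right), \qquad \mathbf{R} \in \mathbb{R}^3
$$

Here $\mathbf{R}$ is the gyrocenter position (three dimensions), $v_\parallel$ is the velocity along the magnetic field and $\mu$ is the magnetic moment (two more), giving $3 + 2 = 5$ dimensions plus time. Averaging over the fast gyration angle is what removes the sixth dimension of full kinetic phase space. Each additional charge-state species adds another such five-dimensional function to evolve, which is why tens of tungsten species are so costly.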

To work around this limitation, Chang pares problems down where he can. One aspect of his project examines the effect of tungsten particles that break away from the tokamak wall. Even in tiny quantities, tungsten can radiate enough energy out of the plasma to shut the tokamak down. And there are dozens of tungsten charge states to account for, which again is impossible on a single exascale machine. So Chang’s team has devised a strategy to bundle similar tungsten species together and simulate them that way on Aurora.
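The article does not say how Chang’s team groups tungsten species. One generic approach, often called charge-state bundling or superstaging, replaces a run of neighboring charge states with a single bundled species that carries their total density and a density-weighted mean charge. The sketch below illustrates that idea under those assumptions; `Bundle`, `bundle_charge_states`, the bundle boundaries and the density profile are all hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass
class Bundle:
    z_min: int       # lowest charge state in the bundle
    z_max: int       # highest charge state in the bundle (inclusive)
    density: float   # summed density of the member charge states
    z_mean: float    # density-weighted mean charge <Z>
    z2_mean: float   # density-weighted <Z**2>, kept because some terms scale with Z**2


def bundle_charge_states(
    densities: Sequence[float], ranges: Sequence[Tuple[int, int]]
) -> List[Bundle]:
    """Collapse per-charge-state densities into a few bundled species.

    densities[z] is the density of charge state z (z = 0 is neutral);
    ranges lists inclusive (z_min, z_max) groups chosen by the user.
    """
    bundles = []
    for z_min, z_max in ranges:
        if not 0 <= z_min <= z_max < len(densities):
            raise ValueError(f"bad bundle range ({z_min}, {z_max})")
        members = range(z_min, z_max + 1)
        n = sum(densities[z] for z in members)
        if n > 0:
            z_mean = sum(z * densities[z] for z in members) / n
            z2_mean = sum(z * z * densities[z] for z in members) / n
        else:
            # Empty bundle: fall back to the midpoint so its charge is defined
            z_mean = 0.5 * (z_min + z_max)
            z2_mean = z_mean ** 2
        bundles.append(Bundle(z_min, z_max, n, z_mean, z2_mean))
    return bundles


if __name__ == "__main__":
    # Tungsten (atomic number 74) has 75 charge states, W0 through W74+.
    # Made-up density profile peaked near charge state 40, for illustration only.
    densities = [math.exp(-((z - 40) / 8.0) ** 2) for z in range(75)]
    ranges = [(0, 19), (20, 34), (35, 44), (45, 59), (60, 74)]
    for b in bundle_charge_states(densities, ranges):
        print(f"W{b.z_min}-{b.z_max}: n={b.density:.3f}, <Z>={b.z_mean:.2f}")
```

In this sketch, five bundles stand in for 75 charge states, so a simulation would evolve five species instead of 75. The trade-off is that physics varying within a bundle is represented only through its averaged moments.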

Another aspect of Chang’s project analyzes the risk that plasma will damage the divertor plates in an ITER-size tokamak (the plates remove exhaust heat from the system), and how impurities such as tungsten and neon might mitigate that damage.

Aurora will make it possible to do calculations for his project in a matter of hours, rather than the several days it might have taken on previous computers. Chang noted that, beyond the processing speed, Aurora’s high-capacity memory makes it newly possible to conduct higher-fidelity simulations than before. (At 20.4 petabytes, Aurora’s memory is more than 40 times that of previous supercomputers.)

“Once we understand the basic physics of these problems in a tokamak, then we can be creative and find a way to manage them,” Chang said.

ALCF’s Broad Reach

The private sector also relies on Argonne’s computing resources. Scientists at TAE Technologies, a California-based fusion energy company, used ALCF supercomputers to develop the company’s magnetic fusion plasma confinement devices.

“Simplistic simulations can miss a lot of details,” Jaeyoung Park, a lead scientist at TAE, recently told Hewlett Packard Enterprise. “The ALCF gave us the computational power to look at these things at a much deeper level and gain a better understanding, as well as identify ways to make our systems work better.”

Another company, Tennessee-based Type One Energy, is using DOE computing resources, including ALCF’s Polaris, to develop a new physics design basis for a pilot power plant. The work is being done through DOE’s Innovative and Novel Computational Impact on Theory and Experiment (INCITE) program, which allots ALCF production supercomputer time to researchers working on computationally intensive projects that address “grand challenges” in science and engineering.

Now that the Aurora Early Science Program has come to a close, both Chang and Tang are continuing their fusion energy research at the ALCF through INCITE awards.

Tang noted that even with the best supercomputers, fusion energy still needs talented scientists across disciplines.

“These machines are not going to do the work for you. They are powerful tools, but they also tell you what you don’t know,” he said. “As long as we stay focused and have bright, young scientists engaged in fusion energy, then I’m very hopeful for the future.”


Source: Christina Nunez, Argonne National Laboratory
