PNNL: Robotics and AI Power Biotechnology Advances

March 19, 2026 — By optimizing microorganisms that could bolster production of high-value fuels, chemicals, and materials, Pacific Northwest National Laboratory (PNNL) researchers are compressing the time from lab experiments to commercial biotechnology readiness.

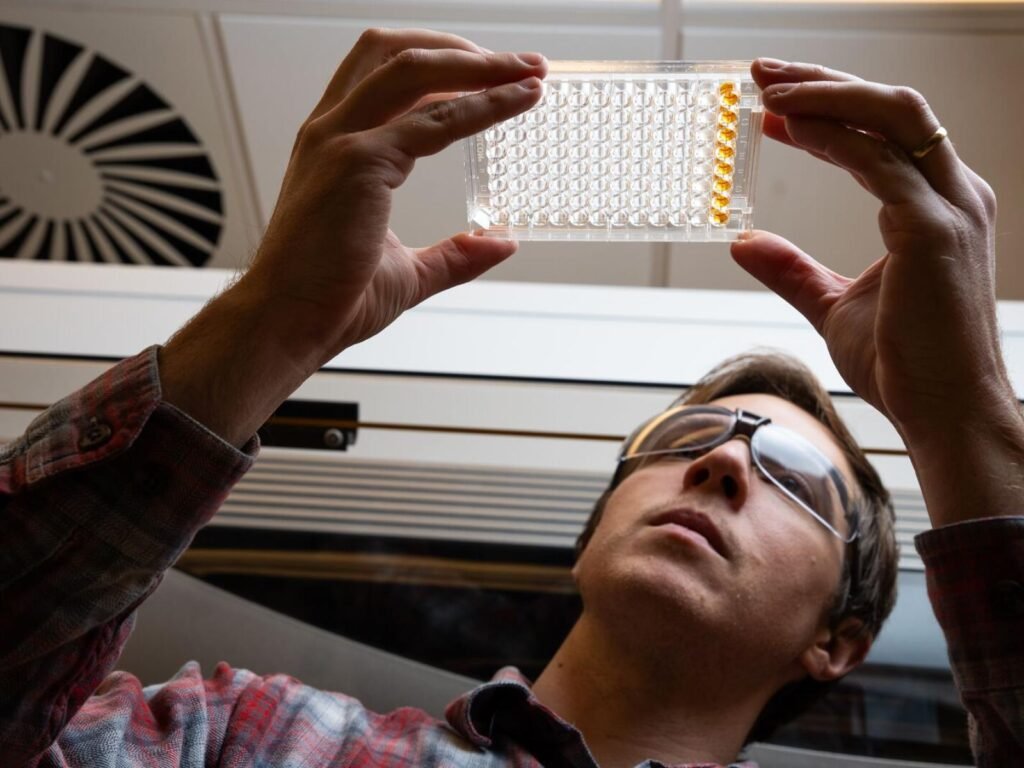

Microbiologist Bram Stone observes bacterial growth in an automated liquid handling system, which has been paired with AI to optimize growth conditions. Photo credit: Andrea Starr, PNNL.

The acceleration has been made possible by integrating AI and high-throughput automation into existing research programs that already harness these microorganisms.

A Test Case for AI and Automation in Experimental Design

Researchers Bram Stone and Joonhoon Kim have trained an open-source AI platform to focus on the growth boundaries of microbial systems, that is, the point at which they either thrive or stop growing. They reasoned that an AI algorithm trained to analyze important growth conditions could help them narrow the search space for optimized conditions exponentially faster than traditional experimental approaches would. Stone, a microbiologist, and Kim, a computational scientist, combined their expertise to modify BacterAI, which was originally created by a team at the University of Michigan led by Paul Jensen.

Jensen created BacterAI to accelerate infectious disease research using robotics, genomics, and computation to design new therapies. BacterAI operates on binary responses to data inputs, indicating either the presence (yes) or absence (no) of an ingredient. This approach is effective for experiments where presence or absence is the only information researchers seek, but to use the AI for investigating the growth or demise of microorganisms, scientists at PNNL had to change the algorithm to account for a range of growth conditions in experimental designs.
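To illustrate the difference between a presence/absence design and one that covers a range of concentrations, here is a minimal Python sketch. It is not BacterAI's code. The ingredient names and the choice of four levels (0, 1, 2, or 3 grams per 10 liters) are assumptions made for illustration only.

```python
from itertools import product

# Hypothetical ingredient list, for illustration only.
INGREDIENTS = ["nitrogen", "trace_minerals", "salts"]


def binary_designs(names):
    """Every combination where each ingredient is absent (0) or present (1)."""
    return [dict(zip(names, combo)) for combo in product((0, 1), repeat=len(names))]


def graded_designs(names, levels):
    """Every combination where each ingredient takes one of several concentrations."""
    return [dict(zip(names, combo)) for combo in product(levels, repeat=len(names))]


binary = binary_designs(INGREDIENTS)
# Assumed levels in grams per 10 liters; 0.0 stands for "absent".
graded = graded_designs(INGREDIENTS, levels=(0.0, 1.0, 2.0, 3.0))

print(len(binary))  # 2**3 = 8 designs
print(len(graded))  # 4**3 = 64 designs
```

Even in this toy case the graded design space is eight times larger than the binary one, which suggests why exhaustive testing quickly becomes impractical as ingredients and levels are added.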

Stone called the AI training process “search and learn.”

Rather than asking the AI whether an ingredient is present, he said, the model now explores concentration ranges—1, 2, or 3 grams within 10 liters, and so on—proposing small, incremental changes to ingredient levels and learning from simulated outcomes.

“The computer runs through simulations for each step until it makes a prediction that growth will stop. And that’s the point where we know we need to delve deeper and perform more detailed analyses of our microbes,” said Stone. Exploring concentration ranges is critical to identifying the growth boundaries of a microorganism.
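The stepwise search Stone describes can be sketched roughly as follows. This is a simplified illustration, not the modified BacterAI algorithm: `toy_growth_model` is a hypothetical stand-in for the trained model's prediction, and the step size, threshold, and concentration cap are assumed values.

```python
def toy_growth_model(conc_g_per_10l: float) -> float:
    """Hypothetical predictor: growth rises, peaks at 4 g, and collapses by 8 g."""
    return max(0.0, conc_g_per_10l * (8.0 - conc_g_per_10l) / 16.0)


def find_growth_boundary(predict, start=1.0, step=1.0, max_conc=20.0, threshold=0.05):
    """Raise the concentration in small increments until predicted growth stops.

    Returns (last concentration predicted to grow, first concentration
    predicted to stop). The second value is None if no boundary is found
    at or below max_conc.
    """
    conc = start
    last_growing = None
    while conc <= max_conc:
        if predict(conc) < threshold:
            return last_growing, conc
        last_growing = conc
        conc += step
    return last_growing, None


boundary = find_growth_boundary(toy_growth_model)
print(boundary)  # (7.0, 8.0) for this toy model
```

In this sketch the interval between the two returned concentrations is the region that would be handed off for more detailed physical experiments.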

PNNL has made a large investment in decoding and predicting the ways that complex biological systems interact with their environments and help shape them. The ability to predict and engineer such systems is behind the burgeoning field of predictive phenomics. PNNL’s internal investment, called the Predictive Phenomics Initiative (PPI), is funding Stone and Kim’s project, as well as 10 more.

Optimizing Conditions for Industrial Scale Growth

Bioprocess scientist Jeff Czajka leads another PPI project and is also wrestling with the challenge of maintaining productivity of the microbes during the transition from lab-scale to industrial-scale tanks.

Czajka is conducting experiments with a versatile yeast called Yarrowia lipolytica that is used to produce biofuels, oils, and flavorings. By varying environmental conditions in 2-liter lab-scale bioreactors, Czajka aims to maintain the organism’s growth and production rate at industrial quantities.

This year, Czajka is partnering with Stone and Kim, contributing data and experimental conditions—such as pH, temperature, oxygen, and nitrogen—that will be used as inputs for the AI to optimize experimental design.

“We’ll combine the environmental conditions of a bioreactor with microorganisms in individual wells, allowing us to control the variables while simultaneously running many experiments,” said Stone. “We’re really excited to get started and look forward to comparing our data with Czajka’s experiments in the bioreactors.”

Moving to High-Throughput Automation with Tecan

In the coming months, Stone and Kim plan to integrate the modified AI model with a new Tecan Fluent automated liquid handling system, coupled to a fluorescence plate reader and incubator, to increase experimental throughput.

Stone plans to input 13 experimental variables into the AI, which will generate many thousands of distinct experiments. After simulating these experiments, the AI will pare the list to 300 key experiments. The Tecan system will then use a robotic arm to prepare 12 acrylic plates the size and shape of a postcard. Each plate has 96 tiny wells that hold 25 experiments done in triplicate, plus several types of control experiments to ensure the quality of the automated data. The Tecan executes the experimental protocol, incubates the plates for two days, and then generates the data that Stone subsequently feeds back into the AI model for further refinement.
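The plate arithmetic works out as 25 experiments × 3 replicates = 75 wells per plate, leaving at most 21 of the 96 wells for controls, and 12 plates × 25 experiments = 300 experiments per batch. The Python sketch below lays out one such plate. The well ordering and the `control_or_spare` label are assumptions for illustration; the article does not describe the actual layout or how many of the remaining wells hold controls.

```python
from string import ascii_uppercase


def plate_layout(experiment_ids, replicates=3, rows=8, cols=12):
    """Map well names (A1..H12) to experiment IDs, filling wells row by row.

    The row-by-row ordering is a hypothetical choice. Wells left over after
    all replicates are placed are labeled 'control_or_spare'.
    """
    wells = [f"{ascii_uppercase[r]}{c}" for r in range(rows) for c in range(1, cols + 1)]
    needed = len(experiment_ids) * replicates
    if needed > len(wells):
        raise ValueError(f"{needed} wells needed but the plate has {len(wells)}")
    remaining = iter(wells)
    layout = {}
    for exp_id in experiment_ids:
        for _ in range(replicates):
            layout[next(remaining)] = exp_id
    for well in remaining:
        layout[well] = "control_or_spare"
    return layout


layout = plate_layout(list(range(25)))
leftover = sum(1 for v in layout.values() if v == "control_or_spare")
print(len(layout), leftover)  # 96 wells, 21 not used by experiments
print(12 * 25)                # 300 experiments across 12 plates
```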

In just 18 minutes, the AI suggests another set of 300 experiments based on what it learned from the data produced in the previous batch.

“As humans, we wouldn’t be able to analyze data from 300 experiments in only 18 minutes and design the next set of experimental parameters,” he said. With this level of efficiency, researchers could realistically conduct 900 experiments per week while simulating new experiments across a parameter space of several billion possible experiments. Previous research has shown that 10,000 to 20,000 experiments can provide enough data to accurately represent a microorganism’s behavior in a system.
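As a rough back-of-the-envelope check on these figures, the short Python sketch below estimates how long it would take to reach 10,000 to 20,000 experiments at 900 per week. It assumes a steady pace with no downtime, which the article does not claim.

```python
import math

BATCH_SIZE = 300            # experiments proposed per AI round (from the article)
EXPERIMENTS_PER_WEEK = 900  # weekly throughput cited in the article


def weeks_needed(target_experiments, per_week=EXPERIMENTS_PER_WEEK):
    """Whole weeks required to reach a target count at a constant weekly rate."""
    return math.ceil(target_experiments / per_week)


batches_per_week = EXPERIMENTS_PER_WEEK // BATCH_SIZE
print(batches_per_week)      # 3 batches of 300 per week
print(weeks_needed(10_000))  # 12 weeks
print(weeks_needed(20_000))  # 23 weeks
```

Under that assumption, the data set size cited from previous research would take roughly three to six months of continuous operation.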

Stone said they’re also using the AI and automation systems to investigate a hardy soil bacterium called Pseudomonas putida that can break down waste streams and produce chemicals.

A specific strain of P. putida, he explained, naturally produces nitrogen during growth, yet dies when exposed to excessive amounts of it. By simulating incremental additions of ingredients, such as nitrogen, trace minerals, or salts, to the microorganism’s growth mixture, the AI agent will search for the point at which these increments stop the strain from growing. These critical parameters form the basis for the next round of physical experiments.

“AI and automation are enabling us to understand growth boundaries at a faster rate, exponentially accelerating the time from lab discovery to commercialization. The faster we can get scientific solutions to industry, the greater impact we have on driving down costs,” said Stone.

The integration of AI and automation into biological experimentation aligns with PNNL’s broader Genesis Mission effort to accelerate scientific discovery using computing intelligence and power.

The updated BacterAI algorithm developed by Stone and Kim will be made available in the PPI’s open-source repository on GitHub, with original design credit to Jensen’s team.
