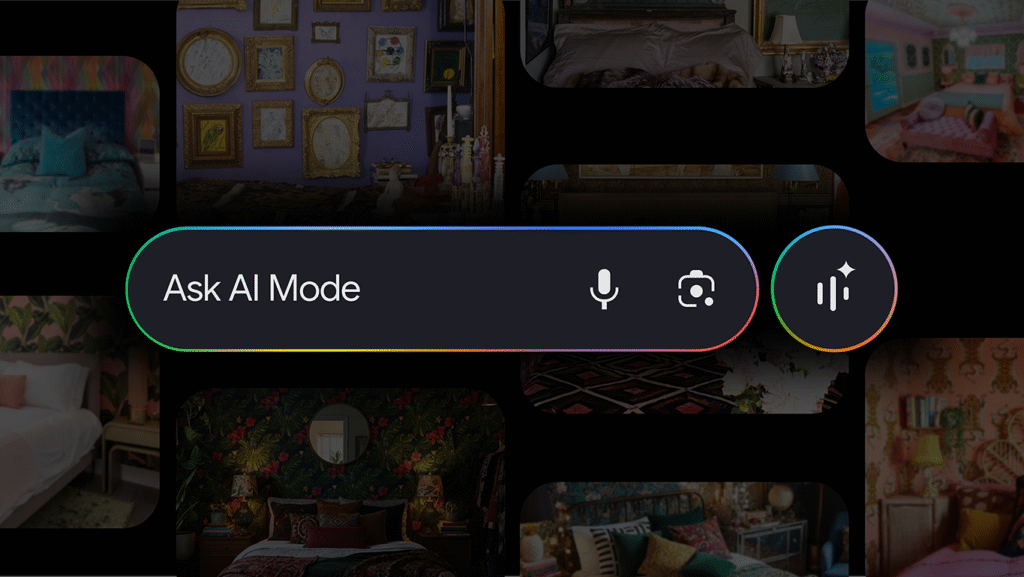

Google recently announced an update to AI Mode in Google Search that gives users access to a wider range of visual results simply by describing or showing the platform what they’re looking for. Powered by Gemini 2.5’s advanced multimodal and language capabilities, the update uses visual understanding technologies like Lens and is rolling out in English to users in the U.S. starting this week.


Google says that the update is built on a new “visual search fan-out” technique, which greatly enhances its ability to understand images. This allows AI Mode to perform a comprehensive analysis of a photo by recognizing subtle details and secondary objects in addition to the main subject. By running multiple queries in the background, the system combines the visual context with the user’s natural language question to return more relevant results.

With the new feature, users can begin a search with specific details (e.g. clothing styles or interior design inspirations), and AI Mode will return more relevant visuals based on the user’s query. The search can then be refined conversationally through back-and-forth dialogue and follow-up requests.

Because AI Mode is multimodal, users can also start a search by uploading or snapping a photo, and each image in the results comes with a link to its source. Furthermore, users on mobile devices can search within a specific image and ask conversational follow-up questions about what they are seeing.

For shopping, users can describe what they are seeking in a more casual manner instead of manually setting filters to fit specific criteria. AI Mode will then provide a set of options available on the market, and users can add further details on what they’re looking for, such as product colour, pricing, and more.