Microsoft has unveiled Maia 200, the latest iteration of its in-house chip for powering AI workloads. Maia 200 sports some impressive specs, including 10 petaflops of FP4 performance and 216GB of HBM3, and gives Microsoft and its Azure cloud an immediate boost in AI token generation. More importantly, it gives Microsoft bragging rights over the in-house AI accelerators developed by AWS and Google Cloud.

Microsoft says Maia 200 is its first chip designed specifically to address the challenges of AI performance. In addition to raw number-crunching horsepower, AI inference demands lots of high-speed memory, with fast links between the memory and the processors. Maia 200 appears to deliver on both fronts.

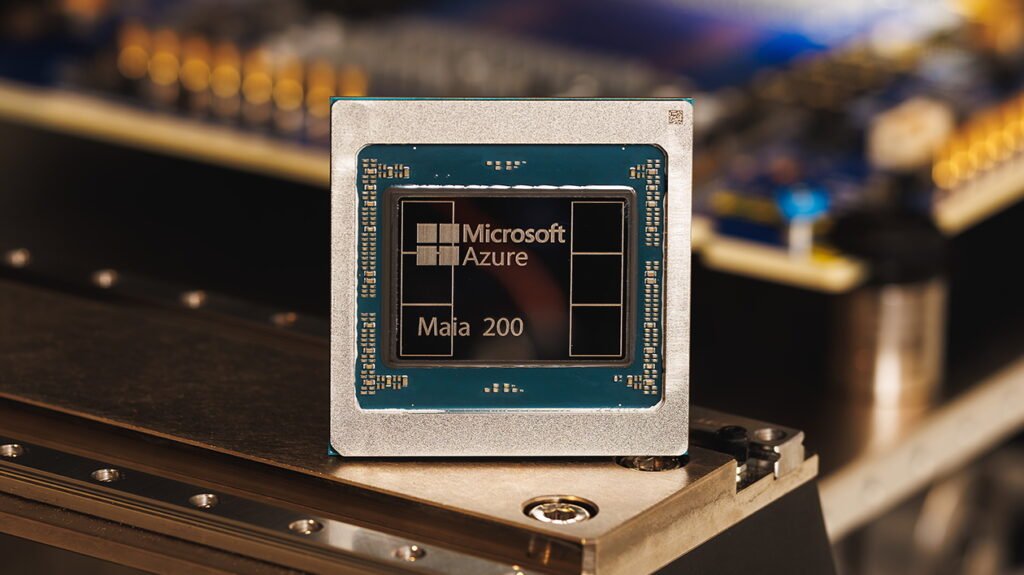

(Source: Microsoft)

Maia 200 is built on TSMC’s 3 nm process and operates within a 750-watt thermal design power (TDP) envelope. At its center are two execution engines: a Tile Tensor Unit (TTU), which handles high-throughput matrix multiplication and convolution in FP8, FP6, and FP4 precision, and a Tile Vector Processor (TVP), which executes SIMD (Single Instruction, Multiple Data) instructions in FP8, BF16, and FP32.
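To make the low-precision formats more concrete, here is a minimal sketch of how a 4-bit floating-point value can be decoded and how a real number is rounded into it. It assumes the OCP Microscaling "E2M1" FP4 layout (1 sign bit, 2 exponent bits, 1 mantissa bit, exponent bias 1); Microsoft has not said which FP4 encoding the TTU uses, and the function names are illustrative only.

```python
# Hypothetical illustration: assumes the OCP MX "E2M1" FP4 layout
# (1 sign bit, 2 exponent bits, 1 mantissa bit, exponent bias 1).
# Microsoft has not stated which FP4 encoding Maia 200 uses.

def decode_e2m1(code: int) -> float:
    """Decode a 4-bit E2M1 code into its real value."""
    if not 0 <= code <= 0xF:
        raise ValueError("FP4 code must fit in 4 bits")
    sign = -1.0 if code & 0b1000 else 1.0
    exponent = (code >> 1) & 0b11
    mantissa = code & 0b1
    if exponent == 0:
        # Subnormal: no implicit leading 1; scale is 2**(1 - bias) = 1.
        return sign * mantissa * 0.5
    # Normal: implicit leading 1, scaled by 2**(exponent - bias).
    return sign * (1.0 + mantissa * 0.5) * 2.0 ** (exponent - 1)


# Every distinct value E2M1 can represent (+0 and -0 collapse to one entry).
E2M1_VALUES = sorted({decode_e2m1(c) for c in range(16)})


def quantize_e2m1(x: float) -> float:
    """Round x to the nearest representable E2M1 value, saturating at +/-6."""
    return min(E2M1_VALUES, key=lambda v: abs(v - x))
```

With only 15 distinct values (0, ±0.5, ±1, ±1.5, ±2, ±3, ±4, ±6), FP4 formats typically pair each block of values with a shared scale factor, as the MX formats do, to cover a useful dynamic range.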

The TTU and TVP execution engines are connected to 216GB of high-bandwidth memory (HBM3) as well as 272MB of on-die SRAM, split between cluster-level SRAM (CSRAM) and tile-level SRAM (TSRAM). A Direct Memory Access (DMA) subsystem keeps data flowing between TSRAM and the TTUs, while a small Tile Control Processor (TCP) orchestrates work between the TTU and the DMA.

A defining characteristic of the Maia 200 architecture is its abundant memory and its memory hierarchy, according to a deep dive on the chip by Saurabh Dighe, CVP of System & Architecture, and Artour Levin, VP of AI Silicon Engineering, on the Azure Engineering Blog. “This substantial on‑die memory resource enables a wide range of low‑latency, bandwidth‑efficient data‑management strategies,” they write. “Both CSRAM and TSRAM are fully software‑managed, allowing developers–or the compiler/runtime–to deterministically place and pin data for precise control of locality and movement.”
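"Software-managed" means there is no hardware cache deciding what stays on chip; software assigns addresses itself. The sketch below is a hypothetical model of that idea, not a Maia API: the `Scratchpad` class, its methods, and the byte sizes are invented for illustration. Pinned tensors (for example, weights reused across many steps) are placed from the bottom and never move, while transient tensors are placed from the top and discarded together between phases, so the same sequence of calls always produces the same layout.

```python
# Hypothetical model of a software-managed scratchpad (not a Maia API).
# Pinned tensors grow up from offset 0 and never move; transient tensors
# grow down from the top and are discarded together between phases.


class Scratchpad:
    def __init__(self, name: str, capacity: int) -> None:
        self.name = name
        self.capacity = capacity  # bytes
        self.low = 0              # next free byte for pinned data
        self.high = capacity      # lowest byte used by transient data
        self.offsets: dict[str, int] = {}
        self.pinned: set[str] = set()

    def place(self, tensor: str, size: int, pin: bool = False) -> int:
        """Assign a deterministic offset: same call sequence, same layout."""
        if tensor in self.offsets:
            raise ValueError(f"{tensor} is already placed")
        if size > self.high - self.low:
            raise MemoryError(f"{self.name}: no room for {tensor} ({size} bytes)")
        if pin:
            offset = self.low
            self.low += size
            self.pinned.add(tensor)
        else:
            self.high -= size
            offset = self.high
        self.offsets[tensor] = offset
        return offset

    def clear_transient(self) -> None:
        """Drop all unpinned tensors; pinned tensors keep their offsets."""
        self.offsets = {t: o for t, o in self.offsets.items() if t in self.pinned}
        self.high = self.capacity


tsram = Scratchpad("TSRAM", capacity=1024)
tsram.place("weights", 512, pin=True)  # offset 0
tsram.place("act0", 256)               # offset 768
tsram.place("act1", 256)               # offset 512
tsram.clear_transient()
tsram.place("act2", 256)               # offset 768 again
```

The two-ended layout is one simple way to keep pinned data fixed without fragmentation; a real compiler or runtime would use far more sophisticated placement.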

The Maia 200 chip features an on-die Ethernet network interface card (NIC) that delivers 2.8 TB per second of bi-directional bandwidth with adjacent chips. According to Dighe and Levin, Maia 200 employs a “two-tier, scale-up” topology built on an Ethernet-based scale-up interconnect, delivering high-bandwidth, low-latency communication across clusters of up to 6,144 accelerators.

Microsoft says it can connect up to 6,144 Maia 200 accelerators in a “two-tier, scale-up” cluster topology (Image courtesy Microsoft)
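A quick back-of-the-envelope calculation puts those figures in perspective. This sketch simply multiplies the per-chip numbers quoted in this article; the assumptions are ours: decimal units (1 TB = 1,000 GB), an even split of the 2.8 TB/s bi-directional bandwidth into 1.4 TB/s per direction, and no allowance for protocol overhead, congestion, or multi-hop routing. The totals are raw sums, not usable capacity.

```python
# Back-of-the-envelope cluster math from the per-chip figures in the article.
# Assumptions (ours): decimal units, the 2.8 TB/s bi-directional NIC bandwidth
# splits evenly into 1.4 TB/s per direction, and protocol overhead,
# congestion, and topology hops are ignored.

MAX_CLUSTER_CHIPS = 6_144
HBM_GB_PER_CHIP = 216
FP4_PFLOPS_PER_CHIP = 10
NIC_BIDIR_TB_PER_S = 2.8


def cluster_totals(chips: int = MAX_CLUSTER_CHIPS) -> dict[str, float]:
    """Aggregate raw HBM capacity (TB) and peak FP4 compute (exaflops)."""
    return {
        "hbm_tb": chips * HBM_GB_PER_CHIP / 1_000,
        "fp4_exaflops": chips * FP4_PFLOPS_PER_CHIP / 1_000,
    }


def ideal_transfer_ms(gigabytes: float, bidir_tb_per_s: float = NIC_BIDIR_TB_PER_S) -> float:
    """Idealized lower bound for sending data one way over the NIC, in ms."""
    per_direction_gb_per_s = bidir_tb_per_s * 1_000 / 2
    return gigabytes / per_direction_gb_per_s * 1_000


print(cluster_totals())        # ~1,327 TB of HBM, ~61.4 exaflops of FP4
print(ideal_transfer_ms(1.0))  # ~0.714 ms per GB
```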

According to Dighe and Levin, the tile-level processing power of Maia 200, combined with its DMA and network-on-chip (NoC) capabilities, lets the chip scale to the levels demanded by today’s massive AI workloads.

“The DMA engines are architected for multichannel, high-bandwidth transfer and support 1D/2D/3D strided movement, enabling common ML tensor layouts to be moved efficiently between on-chip SRAM, HBM, and external interfaces while overlapping data movement with compute,” they write. “Meanwhile, the NoC provides scalable, low-latency communication across clusters and memory subsystems and supports both unicast and multicast transfers–an important capability for distributing tensor blocks and coordinating parallel execution.”
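A strided transfer is described by a base address, a shape, and a stride per dimension rather than by a single contiguous range. The sketch below models a 3D strided copy in plain Python on flat lists; `strided_copy_3d` and its parameters are hypothetical and say nothing about the actual Maia DMA descriptor format. 1D and 2D transfers fall out as special cases with size-1 dimensions.

```python
# Hypothetical model of a 3D strided copy (not the Maia DMA interface).
# Strides are measured in elements, and buffers are flat Python lists.


def strided_copy_3d(src, src_base, src_strides, dst, dst_base, dst_strides, shape):
    """Copy a shape[0] x shape[1] x shape[2] block using per-dimension strides."""
    d0, d1, d2 = shape
    for i in range(d0):
        for j in range(d1):
            for k in range(d2):
                s = src_base + i * src_strides[0] + j * src_strides[1] + k * src_strides[2]
                d = dst_base + i * dst_strides[0] + j * dst_strides[1] + k * dst_strides[2]
                dst[d] = src[s]


# A 2x4x4 tensor stored row-major in "HBM": strides (16, 4, 1), values 0..31.
hbm = list(range(32))

# Gather the 2x2x2 tile starting at index (0, 1, 1) into a contiguous
# "SRAM" buffer with strides (4, 2, 1).
sram = [0] * 8
strided_copy_3d(hbm, 0 * 16 + 1 * 4 + 1, (16, 4, 1), sram, 0, (4, 2, 1), (2, 2, 2))
print(sram)  # [5, 6, 9, 10, 21, 22, 25, 26]
```

Hardware DMA engines perform this address arithmetic themselves, which is what lets tiles of a larger tensor be gathered into on-chip buffers while compute proceeds on previously loaded data.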

It’s been just over two years since Microsoft rolled out Maia 100, its first-generation AI accelerator designed specifically for AI inference. Built on TSMC’s 5-nanometer process, Maia 100 delivered 1.8 TB per second of bi-directional memory bandwidth and 64 GB of high-bandwidth memory. It offered 3.2 petaflops of MXFP4 performance and 1.6 petaflops of FP8 or MXInt8 performance, roughly one-third of Maia 200’s figures.
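The generational jump can be checked with simple ratios of the quoted figures. The sketch below compares the two chips' 4-bit throughput and memory capacity. One caveat is ours: Maia 100's number is quoted for MXFP4 and Maia 200's for FP4, and this comparison treats them as equivalent without knowing the exact formats.

```python
# Generation-over-generation ratios from figures quoted in the article.
# Caveat (ours): Maia 100's throughput is quoted as MXFP4 and Maia 200's
# as FP4; they are treated as comparable here.

SPECS = {
    "Maia 100": {"fp4_pflops": 3.2, "memory_gb": 64},
    "Maia 200": {"fp4_pflops": 10.0, "memory_gb": 216},
}


def generational_gain(metric: str, new: str = "Maia 200", old: str = "Maia 100") -> float:
    """How many times larger the new chip's figure is than the old chip's."""
    return SPECS[new][metric] / SPECS[old][metric]


for metric in ("fp4_pflops", "memory_gb"):
    print(f"{metric}: {generational_gain(metric):.3f}x")
# fp4_pflops: 3.125x
# memory_gb: 3.375x
```

A 3.125x gain in 4-bit throughput means Maia 100 delivers 0.32 of Maia 200's figure, which is where the "roughly one-third" comparison comes from.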

Maia 200’s capabilities stack up well against other top-tier AI accelerators and make it “an AI inference powerhouse,” says Scott Guthrie, executive vice president for cloud and AI at Microsoft. “In practical terms, Maia 200 can effortlessly run today’s largest models, with plenty of headroom for even bigger models in the future,” he writes in a blog post.

Maia 200 is “the most performant, first-party silicon from any hyperscaler, with three times the FP4 performance of the third generation Amazon Trainium, and FP8 performance above Google’s seventh generation TPU,” Guthrie continued. “Maia 200 is also the most efficient inference system Microsoft has ever deployed, with 30% better performance per dollar than the latest generation hardware in our fleet today.”

Maia 200 lines up well against in-house chips from other hyperscalers (Source: Microsoft)
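Guthrie's "30% better performance per dollar" is easy to misread as "30% cheaper." The sketch below works through both readings of the claim under a simplifying assumption of ours: that cost scales linearly with the amount of hardware needed, ignoring power, networking, and utilization differences.

```python
# What "30% better performance per dollar" implies, assuming (our
# simplification) that cost scales linearly with the hardware needed.


def throughput_at_equal_cost(perf_per_dollar_gain: float) -> float:
    """Relative throughput for the same spend (baseline = 1.0)."""
    return 1.0 + perf_per_dollar_gain


def cost_at_equal_throughput(perf_per_dollar_gain: float) -> float:
    """Relative cost to deliver the same throughput (baseline = 1.0)."""
    return 1.0 / (1.0 + perf_per_dollar_gain)


gain = 0.30
print(f"same spend -> {throughput_at_equal_cost(gain):.2f}x throughput")
print(f"same throughput -> {1 - cost_at_equal_throughput(gain):.1%} lower cost")
# same spend -> 1.30x throughput
# same throughput -> 23.1% lower cost
```

In other words, a 30% gain in performance per dollar buys 1.3x the throughput for the same budget, but cuts the cost of a fixed workload by only about 23%.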

Maia 200 runs in both air-cooled and water-cooled environments. It was designed to work alongside Azure’s third‑party GPU fleet and adheres to standard rack, power, and mechanical architectures. It is integrated into Azure’s native control plane, which Microsoft says makes deployment and servicing non-events, and it can coexist with other AI accelerators in the same data center footprint.

Microsoft plans to use its Maia 200 chips to run a variety of models, including OpenAI’s latest GPT-5.2 models. The chips will also generate synthetic data for training AI models.

The new chip is currently deployed in Microsoft’s US Central datacenter region near Des Moines, Iowa. It will be deployed next in the US West 3 datacenter region near Phoenix, Arizona, with more regions to follow.

This article first appeared on HPCwire.

The post Microsoft Raises the AI Inference Bar with Maia 200 appeared first on AIwire.