NextSilicon has shared some internal benchmark results for its latest AI and HPC chip, the Maverick-2, and the claims are nothing if not bold. The company says its new chip, which is now shipping, can virtually rewire itself to adapt to changing workloads, delivering 10x the computational performance of the latest Nvidia GPUs while consuming just 60% of the electricity.

Trillions of dollars are being spent to build massive AI factories loaded with the most powerful GPUs. The latest Nvidia Blackwell GPU, the standard-bearer for high-performance computing, will offer up to 20 petaflops of FP4 performance. However, Blackwell will also draw up to 1,400 watts, a power demand that is changing how data centers are powered and cooled.

The folks at NextSilicon see things differently. While GPUs and CPUs have helped power great scientific and societal breakthroughs in HPC and AI, they face a future of diminishing returns. Rather than follow the industry in spending great sums on ever-bigger AI factories loaded with ever-more-powerful GPUs (and ever-more-advanced power and cooling systems), NextSilicon’s founders decided to try another approach.

NextSilicon founder and CEO Elad Raz noted that, while the 80-year-old von Neumann architecture has given us a universally programmable foundation for computing, it comes with a lot of overhead. He says that 98% of the silicon is devoted to overhead tasks such as branch prediction, out-of-order logic, and instruction handling, while only 2% of the chip performs the actual computation at the heart of the application.

Chips built with the traditional von Neumann architecture waste up to 98% of their capacity on overhead tasks, NextSilicon says (Image source: NextSilicon)

Raz and his team conceived a new architecture, dubbed Intelligent Compute Architecture (ICA), in which the chip essentially reconfigures itself as the workload changes, keeping overhead to a minimum and maximizing the compute horsepower available for the math behind demanding AI and HPC applications. This idea was the basis for NextSilicon’s patent, titled “Runtime Optimization for Reconfigurable Hardware,” and the guiding principle for the non-von Neumann dataflow architecture used in the Maverick-2 processor.

Raz explained the core insight behind NextSilicon’s chip design philosophy during a media presentation earlier this week.

“We have seen that for compute intensive applications, a small portion of the code runs the majority of the time,” Raz said. “Instead of running every piece of code, every instruction the same way, we thought, let’s focus on what matters. We developed a smart software algorithm that continuously monitors your application. It identifies precisely which code path runs the most, and it reconfigures the hardware on the chip to accelerate exactly those code paths. And we do this all at run-time, in nanoseconds. The result: Efficient performance at scale for the most critical workloads, freeing you to focus on innovation, not overhead.”
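Raz did not describe the monitoring algorithm itself, but the observation behind it, that a few code paths account for most of the run time, is easy to illustrate. The toy Python sketch below assumes a recorded trace of basic-block labels (the labels and the `hot_blocks` helper are hypothetical) and selects the smallest set of blocks that covers a chosen share of all executions. It is not NextSilicon’s algorithm, which the company says runs continuously on the chip at runtime.

```python
from collections import Counter

def hot_blocks(trace, coverage=0.9):
    """Hypothetical helper: return the most frequently executed blocks,
    most frequent first, until they cover `coverage` of all executions."""
    counts = Counter(trace)
    total = sum(counts.values())
    selected, covered = [], 0
    for block, n in counts.most_common():
        if covered >= coverage * total:
            break  # the blocks chosen so far already dominate the run time
        selected.append(block)
        covered += n
    return selected, covered / total

# A made-up trace: a loop body that dominates execution.
trace = ["init"] + ["loop_body"] * 90 + ["check"] * 8 + ["cleanup"]
blocks, share = hot_blocks(trace)
print(blocks, share)  # ['loop_body'] 0.9
```

In a reconfigurable design, blocks like `loop_body` would be the candidates worth mapping directly onto hardware, while rarely executed code can run on a slower general-purpose path.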

NextSilicon’s dataflow architecture is built around a graph. Instead of processing instructions one by one, à la von Neumann, the dataflow processor consists of a grid of arithmetic logic units (ALUs) interconnected in a graph structure. Each ALU handles a particular type of operation, such as multiplication or a logical operation. When input data arrives, the computation is triggered automatically, and the result flows to the next unit in the graph.
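The firing rule described above can be simulated in a few lines. In this minimal Python sketch, each node stands in for an ALU and is modeled as a Python `operator` function; the graph, node names, and `run_dataflow` helper are hypothetical. A node fires as soon as all of its inputs hold values, with no program counter deciding the order. Real hardware fires nodes concurrently, which this software loop only imitates.

```python
import operator

# Hypothetical graph computing out = a*b - (c + d).
# Each node: (operation, names of the inputs it waits for).
GRAPH = {
    "t1": (operator.mul, ["a", "b"]),
    "t2": (operator.add, ["c", "d"]),
    "out": (operator.sub, ["t1", "t2"]),
}

def run_dataflow(graph, inputs):
    values = dict(inputs)   # data tokens that have arrived so far
    pending = set(graph)    # nodes that have not fired yet
    while pending:
        # A node is ready when every one of its inputs has a value.
        ready = [n for n in pending if all(s in values for s in graph[n][1])]
        if not ready:
            raise ValueError("graph is stuck: missing inputs or a cycle")
        for node in ready:  # on hardware, these would all fire at once
            op, srcs = graph[node]
            values[node] = op(*(values[s] for s in srcs))
            pending.remove(node)
    return values

print(run_dataflow(GRAPH, {"a": 3, "b": 4, "c": 1, "d": 2})["out"])  # 9
```

Here `t1` and `t2` become ready in the same step because neither depends on the other; `out` fires only once both results have flowed into it.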

NextSilicon CEO Elad Raz (Image courtesy NextSilicon)

This approach has a big advantage over sequential instruction processing: the chip no longer needs to handle instruction fetching, decoding, or scheduling, overhead tasks that eat up compute cycles.

“For parallel workloads, which describes virtually all modern AI and HPC applications, dataflow’s ability to exploit natural parallelism in the computational graph means hundreds of operations can execute simultaneously, limited only by data dependencies rather than artificial instruction serialization,” Raz writes in a white paper released today as part of the Maverick-2 announcement.
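The idea that parallelism is “limited only by data dependencies” can be made concrete with an as-soon-as-possible (ASAP) schedule, in which each operation runs one step after the latest of its inputs becomes available. The Python sketch below assumes unlimited functional units, one step per operation, and an acyclic graph; the example computes y[i] = a[i]*b[i] + c[i] for four elements and then sums the results with a reduction tree. It illustrates the general principle, not how NextSilicon’s tools map graphs onto the chip.

```python
from collections import defaultdict

def asap_levels(deps):
    """deps maps each operation to the operations whose results it needs.
    Returns {step: [ops]}, placing each op at the earliest step it can run.
    Assumes the graph is acyclic."""
    level = {}

    def depth(op):
        if op not in level:
            # Ops with no dependencies land at step 0.
            level[op] = 1 + max((depth(d) for d in deps.get(op, [])), default=-1)
        return level[op]

    for op in deps:
        depth(op)
    by_level = defaultdict(list)
    for op, lv in level.items():
        by_level[lv].append(op)
    return dict(by_level)

# y[i] = a[i] * b[i] + c[i] for four elements, then a tree sum of the y values.
deps = {}
for i in range(4):
    deps[f"mul{i}"] = []
    deps[f"add{i}"] = [f"mul{i}"]
deps["s01"] = ["add0", "add1"]
deps["s23"] = ["add2", "add3"]
deps["total"] = ["s01", "s23"]

levels = asap_levels(deps)
widths = [len(levels[k]) for k in sorted(levels)]
print(widths)  # [4, 4, 2, 1]
```

Eleven operations finish in four steps instead of eleven, and the number of operations that can run at each step shrinks only where the reduction tree forces later operations to wait on earlier ones.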

This approach gives the Maverick-2 the flexibility and performance often found in an ASIC, but without the long and expensive development timeline, according to NextSilicon. In terms of programmability, the Maverick-2 is compatible with CUDA, allowing the chip to function as a drop-in replacement for Nvidia GPUs, the company says. Python, C++, and Fortran code will also run without rewrites.

“We get 10x faster performance at half of the power consumption. And because we have a drop-in replacement, we can penetrate the market much more quickly,” Raz said during the presentation. “Our technology seamlessly runs CPU and GPU supercomputing tasks, HPC workloads, and advanced AI machine learning models all effortlessly out of the box.”

Maverick-2 feeds and speeds (Image courtesy NextSilicon)

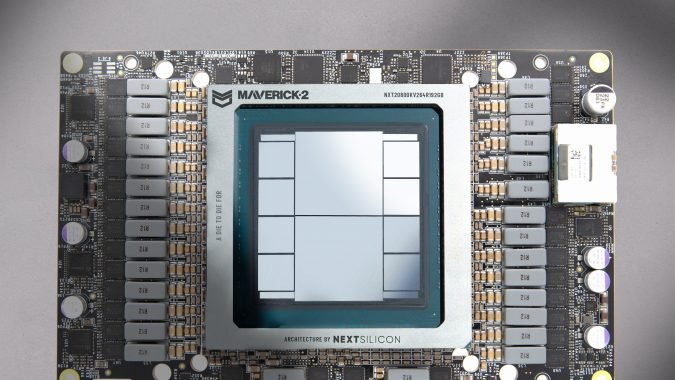

Maverick-2 comes in single-die and dual-die configurations. The single-die Maverick-2 features 32 RISC-V cores, is built on TSMC’s 5nm process, and runs at 1.5GHz. The card supports PCIe Gen5 x16, carries 96GB of HBM3E memory, and delivers 3.2TB/s of memory bandwidth. It sports 128MB of L1 cache, features a 100 Gigabit Ethernet NIC, operates within a thermal design power (TDP) of 400W, and is air-cooled. The dual-die Maverick-2 effectively doubles these capabilities, but it uses the OAM (OCP Accelerator Module) form factor, features dual 100GbE NICs, can be air- or liquid-cooled, and operates within a TDP of 750W.
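These feeds and speeds lend themselves to some quick arithmetic. The sketch below derives two figures from the published specs: how long it takes to read the entire HBM3E capacity once at peak bandwidth, and memory bandwidth per watt of TDP. It assumes decimal units (1TB = 1,000GB), and the dual-die numbers assume capacity and bandwidth exactly double, as the description suggests; NextSilicon’s own dual-die specifications may differ.

```python
# (HBM3E capacity in GB, memory bandwidth in TB/s, TDP in W)
SPECS = {
    "single-die": (96, 3.2, 400),
    "dual-die": (192, 6.4, 750),  # assumption: capacity and bandwidth exactly double
}

def derived(capacity_gb, bandwidth_tbps, tdp_w):
    """Return (ms to read all HBM once at peak bandwidth, GB/s per watt)."""
    sweep_ms = capacity_gb / bandwidth_tbps        # GB / (TB/s) = milliseconds
    gbps_per_watt = bandwidth_tbps * 1000 / tdp_w  # TB/s -> GB/s, then per watt
    return sweep_ms, gbps_per_watt

for name, spec in SPECS.items():
    sweep_ms, eff = derived(*spec)
    print(f"{name}: full-memory sweep {sweep_ms:.1f} ms, {eff:.2f} GB/s per watt")
```

Under these assumptions, both configurations take about 30 ms to stream their full memory once, and the dual-die card delivers slightly more bandwidth per watt (about 8.5 versus 8.0GB/s per watt) because its TDP is less than double the single-die figure.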

NextSilicon shared several internal benchmark figures for Maverick-2. On the Giga-Updates Per Second (GUPS) metric, Maverick-2 delivered 32.6 GUPS at 460 watts, which the company says is 22x faster than CPUs and nearly 6x faster than GPUs. On HPCG (High-Performance Conjugate Gradients), Maverick-2 delivered 600 GFLOPS at 750 watts, which the company says is comparable to leading GPUs at half the power.
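GUPS comes from the HPC Challenge RandomAccess benchmark, which measures how quickly a system can apply read-modify-write updates to random locations in a large table, an access pattern that defeats caches and prefetchers. The Python sketch below follows the benchmark’s general structure (a 64-bit shift-register random stream based on the polynomial x^63 + x^2 + x + 1, XOR updates, and four updates per table entry) but simplifies the seeding; the function names and default seed are hypothetical. Pure Python timings say nothing about Maverick-2; the point is the memory-access pattern.

```python
import time

POLY = 0x7               # feedback taps for x^63 + x^2 + x + 1
MASK64 = (1 << 64) - 1

def next_random(ran):
    """One step of the 64-bit shift-register generator."""
    top = ran >> 63      # was the high bit set before shifting?
    return ((ran << 1) & MASK64) ^ (POLY if top else 0)

def random_access(log2_size, seed=0x2545F4914F6CDD1D):
    """Hypothetical simplified RandomAccess run; returns (table, GUPS)."""
    size = 1 << log2_size
    table = list(range(size))
    n_updates = 4 * size            # four updates per table entry
    ran = seed                      # simplified seeding (any nonzero value)
    start = time.perf_counter()
    for _ in range(n_updates):
        ran = next_random(ran)
        table[ran & (size - 1)] ^= ran  # read-modify-write at a random address
    elapsed = time.perf_counter() - start
    return table, n_updates / elapsed / 1e9

table, gups = random_access(12)
print(f"{gups:.6f} GUPS (pure Python, illustrative only)")
```

Because XOR is its own inverse, replaying the same update stream restores the original table, which is the basis for a simple correctness check similar in spirit to the benchmark’s verification step.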

“What we discussed in detail today is more than a chip,” said Eyal Nagar, NextSilicon’s vice president of R&D. “It’s a foundation, a new way to think about computing. It opens a whole new world of possibilities and optimizations for engineers and scientists.”

Maverick-2 ICA (Image courtesy NextSilicon)

NextSilicon’s focus on optimizing resources makes sense, said Steve Conway, HPC and AI industry analyst at Intersect360 Research. “Traditional CPU and GPU architectures are often constrained by high-latency pipelines and limited scalability,” Conway stated. “There is a clear need for reduced energy waste and unnecessary computations within HPC and AI infrastructures. NextSilicon addresses these important issues with Maverick-2, a novel architecture purpose-built for the unique demands of HPC and AI.”

Maverick-2 has already been deployed at Sandia National Laboratories as part of the Vanguard-2 program and will be used in the upcoming Spectra supercomputer. “Our partnership with NextSilicon, which began over four years ago, has been an outstanding example of how the NNSA laboratories can work with industry partners to mature novel emerging technologies,” stated James H. Laros III, Vanguard program lead, Sandia National Laboratories. “NextSilicon’s continued focus on HPC made them a prime candidate for the Vanguard program.”

Computing platforms shift every 10 to 15 years, said Ilan Tayari, a NextSilicon co-founder and VP of architecture, and each transition unlocks new categories of problems. We saw this as the world moved from mainframes to PCs, from client-server to cloud and from CPU to GPU.

“Each transition made previously impossible applications turn into something routine,” Tayari said. “But here’s the thing: Each transition happened because the previous platform hit fundamental limits on the problems it could solve. The next transition will allow scientists to reach beyond what is possible today.

“Imagine biologists researching cancer using molecular simulations across thousands of human cells. Picture astronomers exploring dark matter with unprecedented granularity across galaxies to uncover fundamental properties of the universe. Consider accurately forecasting natural disasters like floods, hurricanes and forest fires,” he said. “Our vision to liberate us from the confines of computation has never been more critical than it is now.”

This article first appeared on HPCwire.
