Nvidia Launches Ising Open Models for Quantum Calibration and Error Correction

Quantum computers remain limited by noise, instability, and the challenge of error correction in real time. Nvidia’s latest answer is Ising, a new open model family introduced Tuesday that is designed to bring AI-driven control to quantum hardware.

The Ising family includes models for quantum processor calibration and error-correction decoding, two of the main bottlenecks in scaling quantum systems. The models are designed to interpret measurement data, calibrate quantum hardware, and decode errors fast enough to support real-time correction. Today these tasks are handled through a mix of human-guided calibration and classical decoding algorithms.

According to Nvidia, the Ising family has debuted with two model types:

- Ising Calibration: A vision-language model that can rapidly interpret and react to measurements from quantum processors. The company says this enables AI agents to automate continuous calibration, cutting the time it takes from days to hours.

- Ising Decoding: Two variants of a 3D convolutional neural network model, one optimized for speed and the other for accuracy, that perform real-time decoding for quantum error correction. Nvidia claims the Ising Decoding models are up to 2.5x faster and 3x more accurate than PyMatching, the current open-source industry standard.

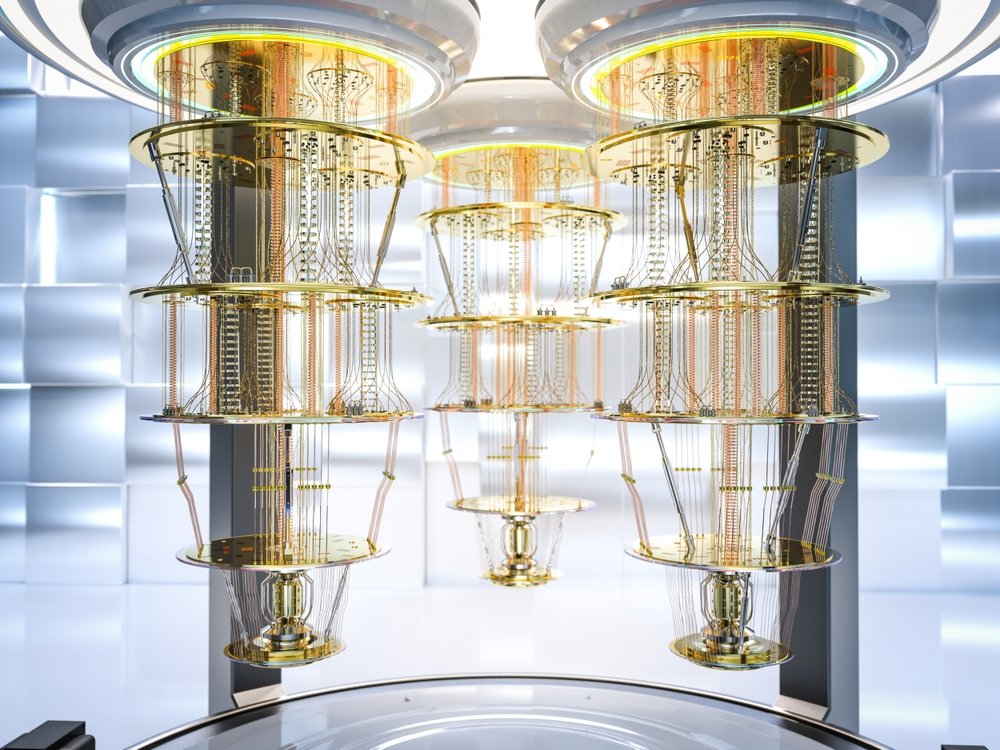

(Shutterstock)

In a press briefing, Nvidia described Ising Calibration as a 35-billion-parameter vision-language model trained to interpret measurement data and automate the full calibration workflow. Conventional approaches rely on physicists or predefined calibration workflows to tune systems before each run, but Ising Calibration is designed for continuous recalibration as hardware drifts over time. Nvidia said the approach is intended to scale to much larger systems, where manual calibration becomes impractical as qubit counts move from the hundreds into the thousands and beyond.

For error correction, Nvidia positions Ising Decoding as a complementary layer rather than a replacement for existing methods. The model acts as a pre-decoder: it uses neural networks to process syndrome data, the error signals derived from qubit measurements, and corrects a large portion of errors before passing the remaining syndromes to traditional algorithms like PyMatching. This hybrid approach is meant to improve speed and accuracy while remaining compatible with existing error-correction pipelines. Nvidia said the models operate at microsecond timescales, fast enough to support real-time correction across multiple qubit modalities.
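The pre-decoder arrangement is easiest to see on a toy problem. The Python sketch below is a hypothetical illustration, not Nvidia's model. It assumes a simple bit-flip repetition code, uses a hand-written local rule (`pre_decode`) as a stand-in for the neural pre-decoder, and uses an exact minimum-weight decoder for that code (`global_decode`) as a stand-in for a matching decoder such as PyMatching. All function names are made up for this example. What it is meant to show is the data flow: the local stage clears easy, isolated defects, and only the residual syndrome reaches the global stage.

```python
# Toy two-stage decoder for a bit-flip repetition code (hypothetical sketch).
# pre_decode stands in for a neural pre-decoder; global_decode stands in for
# a matching decoder such as PyMatching. Neither is Nvidia's implementation.


def syndrome(bits):
    """Parity check between each pair of neighbouring data bits (1 = defect)."""
    return [bits[i] ^ bits[i + 1] for i in range(len(bits) - 1)]


def pre_decode(synd):
    """Local stage: clear isolated pairs of adjacent defects.

    Defects on checks i and i+1, with no defect on either side, are most
    simply explained by a single flip of data bit i+1.
    Returns (partial correction, residual syndrome).
    """
    n = len(synd)
    residual = list(synd)
    correction = [0] * (n + 1)
    i = 0
    while i < n - 1:
        left_clear = i == 0 or synd[i - 1] == 0
        right_clear = i + 2 >= n or synd[i + 2] == 0
        if synd[i] and synd[i + 1] and left_clear and right_clear:
            correction[i + 1] = 1
            residual[i] = residual[i + 1] = 0
            i += 2
        else:
            i += 1
    return correction, residual


def global_decode(synd):
    """Global stage: exact minimum-weight correction for the repetition code.

    Integrating the syndrome from the left yields one error pattern that
    reproduces it; its bitwise complement is the only other one.
    """
    pattern = [0]
    for s in synd:
        pattern.append(pattern[-1] ^ s)
    complement = [1 - b for b in pattern]
    return pattern if sum(pattern) <= sum(complement) else complement


def two_stage_decode(synd):
    """Run the local stage, then pass only the residual syndrome onward."""
    partial, residual = pre_decode(synd)
    rest = global_decode(residual)
    correction = [a ^ b for a, b in zip(partial, rest)]
    return correction, sum(residual)


if __name__ == "__main__":
    error = [1, 0, 0, 0, 1, 0, 0, 0, 0]  # two flipped data bits, 9-bit code
    synd = syndrome(error)
    correction, forwarded = two_stage_decode(synd)
    print(sum(synd), forwarded)  # prints: 3 1
    print(correction == error)   # prints: True
```

In this example the local stage resolves two of the three defects and forwards only one to the global stage. Because a local rule commits to guesses without seeing the whole syndrome, a stage like this can occasionally steer the global decoder toward a logical error; in a learned pre-decoder, keeping that rate low is part of what training has to achieve.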

Nvidia also explained how the models are meant to scale with system size. According to the company, its decoding approach generalizes to larger systems without requiring retraining. Nvidia demonstrated the models at code distances up to 31. Code distance measures how many physical errors a code can tolerate: a code of distance 31 can correct any combination of up to 15 physical errors, and reaching large distances is a key step toward large, fault-tolerant quantum machines. Depending on the error-correction scheme, that regime corresponds to hundreds to thousands of physical qubits per logical qubit, placing the work closer to the scale targeted in current quantum roadmaps.

“Today, the very best quantum processors make an error about once in every 1000 operations, which is amazing, but to become useful accelerators for scientific and enterprise problems, that number needs to become one in a trillion or even less,” said Sam Stanwyck, Nvidia’s director of quantum product, in the briefing. “The good news is that AI can be the answer for how you manage that noise at scale, and it has the potential to enable very rapid progress in closing that gap.”
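To put the distance-31 figure in perspective, the short calculation below assumes the rotated surface code, a common layout in which a distance-d patch uses d² data qubits plus d² − 1 measurement qubits. The article does not say which code Nvidia evaluated, so treat the layout and the helper names as assumptions for illustration.

```python
# Back-of-the-envelope qubit counts, ASSUMING a rotated surface code layout.
# Helper names are hypothetical; the article does not specify the code used.


def correctable_errors(d: int) -> int:
    """Physical errors a distance-d code is guaranteed to correct."""
    return (d - 1) // 2


def rotated_surface_code_qubits(d: int) -> int:
    """Physical qubits per logical qubit: d*d data plus d*d - 1 measurement."""
    return d * d + (d * d - 1)


for d in (3, 11, 21, 31):
    print(d, correctable_errors(d), rotated_surface_code_qubits(d))
# prints:
# 3 1 17
# 11 5 241
# 21 10 881
# 31 15 1921
```

Under that assumption, distance 31 works out to roughly 1,900 physical qubits per logical qubit, consistent with the hundreds-to-thousands range Nvidia cited.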


In the press briefing, Stanwyck described calibration and error correction as “AI-shaped problems,” meaning they involve high-throughput, real-time data processing well suited to GPU-accelerated AI workloads. The goal is to integrate these models into hybrid quantum-classical systems, where GPUs handle control, decoding, and optimization alongside the quantum processor.

“This is the path to quantum GPU supercomputing, which is a quantum accelerator, integrating with the GPU supercomputer, solving valuable problems,” he said.

An important part of Nvidia’s strategy with Ising is that the models are fully open, including training data, frameworks, and workflows for fine-tuning and deployment. The company says a shared foundation is needed more than ever in quantum computing, where hardware architectures, noise characteristics, and error-correction methods vary widely across systems.

“Developers can fine-tune these for their specific hardware and noise characteristics, follow our recipes to integrate these with their agents, use our frameworks to train their own open models, or build on our research to do their own,” Stanwyck said. “It’s everything you need to make this capability yours. And quantum teams have been building this kind of tooling and capability in-house, but now there’s an open foundation they can all build on.”

Nvidia said Ising Calibration is already being used by a range of hardware and research organizations, including Atom Computing, IonQ, IQM Quantum Computers, and the Lawrence Berkeley National Laboratory’s Advanced Quantum Testbed. Ising Decoding is also being evaluated by universities and national labs, including the University of Chicago, UC Santa Barbara, Sandia National Laboratories, and Yonsei University.


The company expects the Ising family to expand over time, with future models potentially addressing other parts of the quantum computing stack, such as circuit optimization, noise characterization, and system-level control. In the short term, Stanwyck expects adoption to be uneven: calibration is more immediately applicable than large-scale error correction, so uptake will depend on where each hardware team is in its roadmap.

“There are plenty of quantum builders, not to mention quantum research groups, who aren’t yet ready to tackle error correction at scale,” Stanwyck said. “That may be a little bit more gated by quantum hardware roadmaps before Ising Decoding is useful, and I’d expect that Ising Calibration, once it can be fine-tuned for different types of calibration processes that different quantum processor builders need, will be a lot more universally useful, at least right away.”

Stanwyck said Nvidia’s larger goal is to accelerate progress toward practical quantum systems.

“We’ve made everything open because we expect this to be a new baseline, where every quantum builder can use these with the ecosystem to make progress together,” he said. “What we’re hoping for with this is that our AI leadership is going to directly accelerate the path to useful quantum computers. The same GPUs that are running the world’s AI can run the control plane for quantum hardware.”
